CELU

-

class

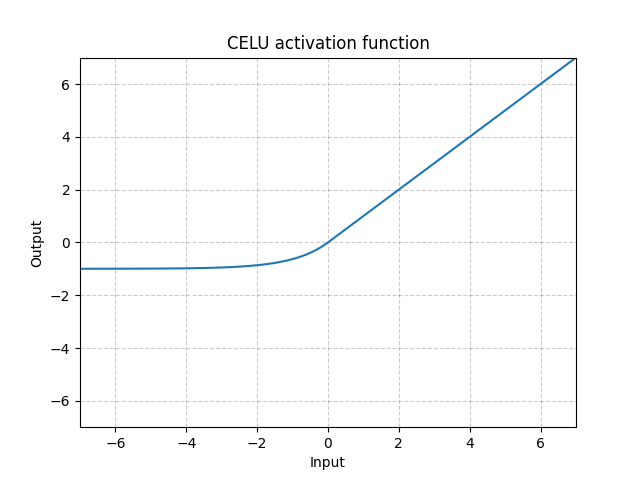

torch.nn.CELU(alpha=1.0, inplace=False)[source]¶ Applies the element-wise function:

More details can be found in the paper Continuously Differentiable Exponential Linear Units.
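As a rough sanity check, the module's output can be compared against a hand-written version of the standard CELU definition, max(0, x) + min(0, α · (exp(x / α) − 1)). The helper name `celu_reference` and the sample values below are illustrative assumptions, not part of the library.

```python
import torch
import torch.nn as nn


def celu_reference(x: torch.Tensor, alpha: float = 1.0) -> torch.Tensor:
    """Hypothetical helper: CELU written out term by term."""
    # Positive part: max(0, x)
    positive = torch.clamp(x, min=0)
    # Negative part: min(0, alpha * (exp(x / alpha) - 1))
    negative = torch.clamp(alpha * (torch.exp(x / alpha) - 1), max=0)
    return positive + negative


x = torch.linspace(-3.0, 3.0, steps=13)
for alpha in (0.5, 1.0, 2.0):
    # The built-in module and the hand-written formula should agree closely.
    assert torch.allclose(nn.CELU(alpha=alpha)(x), celu_reference(x, alpha), atol=1e-6)
```

For positive inputs the function is the identity; for negative inputs it saturates toward -α.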

- Parameters

alpha – the α value for the CELU formulation. Default: 1.0

inplace – can optionally do the operation in-place. Default: False
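The effect of `inplace` can be seen by checking whether the output shares storage with the input. This is a minimal sketch; the tensor values are arbitrary and chosen only for illustration.

```python
import torch
import torch.nn as nn

x = torch.tensor([-2.0, -0.5, 0.0, 1.5])
original = x.clone()

# Default (inplace=False): a new tensor is returned and x is left untouched.
out = nn.CELU(alpha=1.0)(x)
assert torch.equal(x, original)
assert out.data_ptr() != x.data_ptr()

# inplace=True: the input tensor's memory is overwritten with the result.
y = original.clone()
out_inplace = nn.CELU(alpha=1.0, inplace=True)(y)
assert out_inplace.data_ptr() == y.data_ptr()
assert torch.allclose(y, out)
```

In-place operation saves an allocation, but the original input values are lost, which matters if they are needed elsewhere.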

- Shape:

Input: $(N, *)$, where $*$ means any number of additional dimensions

Output: $(N, *)$, same shape as the input
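Because the function is applied element-wise, the output shape should always match the input shape. The shapes below are arbitrary examples picked for illustration.

```python
import torch
import torch.nn as nn

m = nn.CELU()

# Try a 1-D, a 2-D, and a 4-D input; each output keeps its input's shape.
for shape in [(4,), (2, 3), (2, 3, 4, 5)]:
    x = torch.randn(*shape)
    assert m(x).shape == x.shape
```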

Examples:

>>> m = nn.CELU()
>>> input = torch.randn(2)
>>> output = m(input)